The Emerging LLM Stack: A New Paradigm in Tech Architecture

As seasoned developers, we’ve witnessed the ebb and flow of numerous tech stacks. From the meteoric rise of MERN to the gradual decline of AngularJS, and the innovative emergence of Jamstack, tech stacks have been the backbone of web development evolution.

In this blog, we will walk through the modern LLM Stack - an emerging tech stack that is changing how developers build and scale Large Language Model (LLM) applications.

Before you continue, please first read the companion blog on The Evolution of LLM Architecture.

The Evolution of LLM Applications

2023 marked the rise of LLMs and their applications, with Retrieval Augmented Generation (RAG) emerging as the most popular use case. RAG involves using data retrieved from vector databases as context for LLMs to generate human-readable responses.
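To make the RAG flow concrete, here is a minimal sketch of the retrieve-then-prompt step. It assumes a toy word-count "embedding" and an in-memory list in place of a real embedding model and vector database; `embed`, `retrieve`, and `build_prompt` are hypothetical names used only for illustration.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: lowercase word counts (stand-in for a real embedding model)."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[word] * b[word] for word in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, documents: list[str], k: int = 2) -> list[str]:
    """Return the k documents most similar to the query."""
    query_vec = embed(query)
    ranked = sorted(documents, key=lambda d: cosine(query_vec, embed(d)), reverse=True)
    return ranked[:k]


def build_prompt(query: str, documents: list[str], k: int = 2) -> str:
    """Place the retrieved documents into the prompt as context for the LLM."""
    context = "\n".join(f"- {doc}" for doc in retrieve(query, documents, k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"


docs = [
    "The gateway routes requests to model providers",
    "Caching reduces API cost",
    "Bananas are yellow",
]
print(build_prompt("how does caching reduce cost", docs, k=1))
```

In a production setup, the list scan would be replaced by a vector database query and the resulting prompt would be sent to a model provider.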

As we progress through 2024, we’re witnessing advancements in implementation strategies. Key trends include:

- Increased focus on production-ready LLM applications

- Growing emphasis on observability and data versioning

- Introduction of enterprise features to foundational components

Why a New Stack for LLM Applications?

LLM applications, powered by pre-trained models, are deceptively simple to kickstart, but scaling them unveils unique challenges in machine learning and data processing:

- Platform Limitations: Traditional stacks struggle with LLM app demands, especially when dealing with real-time data pipelines.

- Tooling Gaps: Existing tools often fall short in managing LLM-specific workflows, including prompt engineering and efficient API calls.

- Observability Hurdles: Monitoring LLM performance requires specialized solutions for tracking embedding models and external API usage.

- Security Concerns: LLMs introduce new vectors for data breaches and prompt injections, necessitating robust data processing protocols.

For a real-world example of an LLM application’s journey from MVP to a scalable, cost-effective product, check out our companion blog post.

You might be interested in:

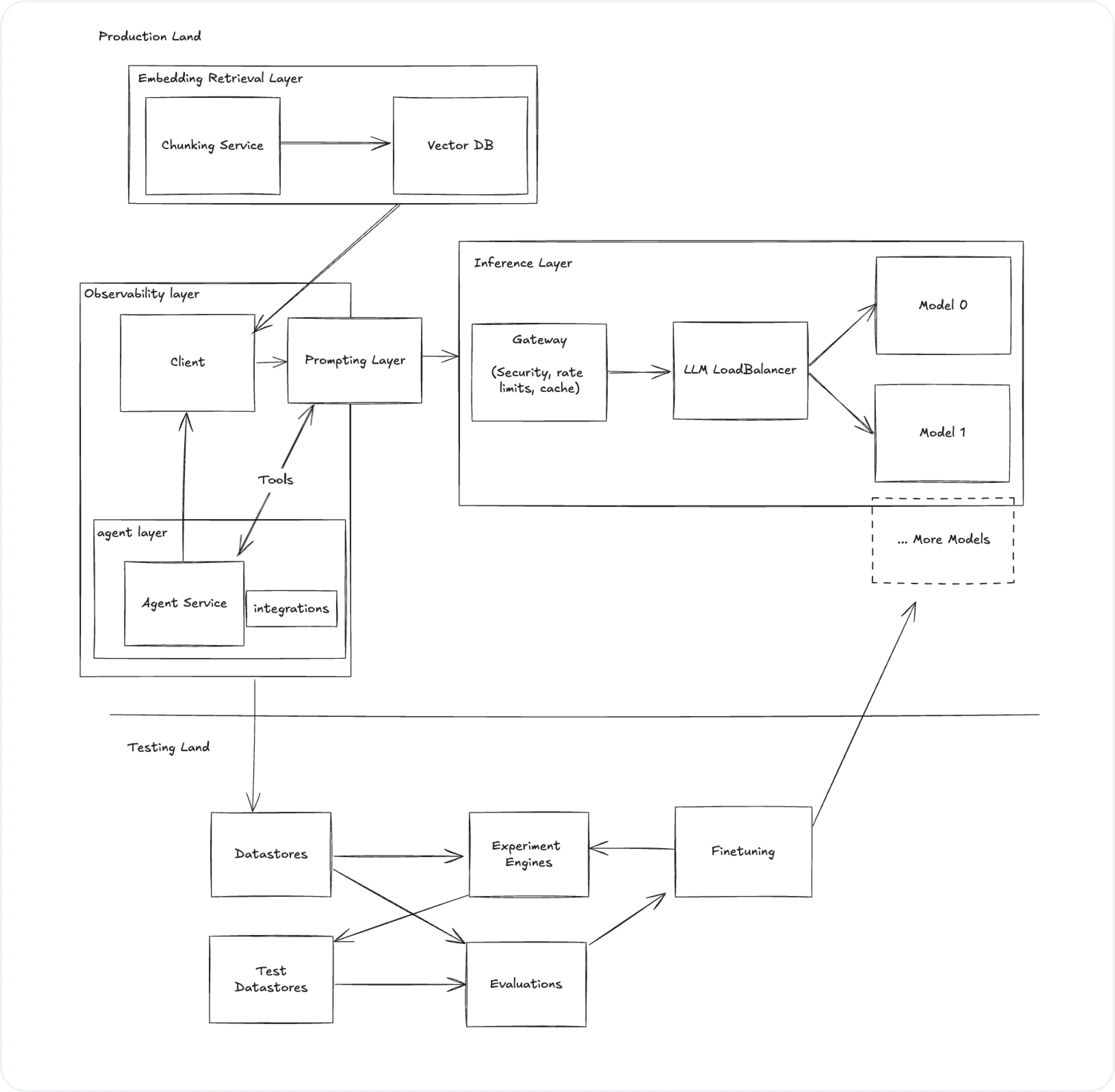

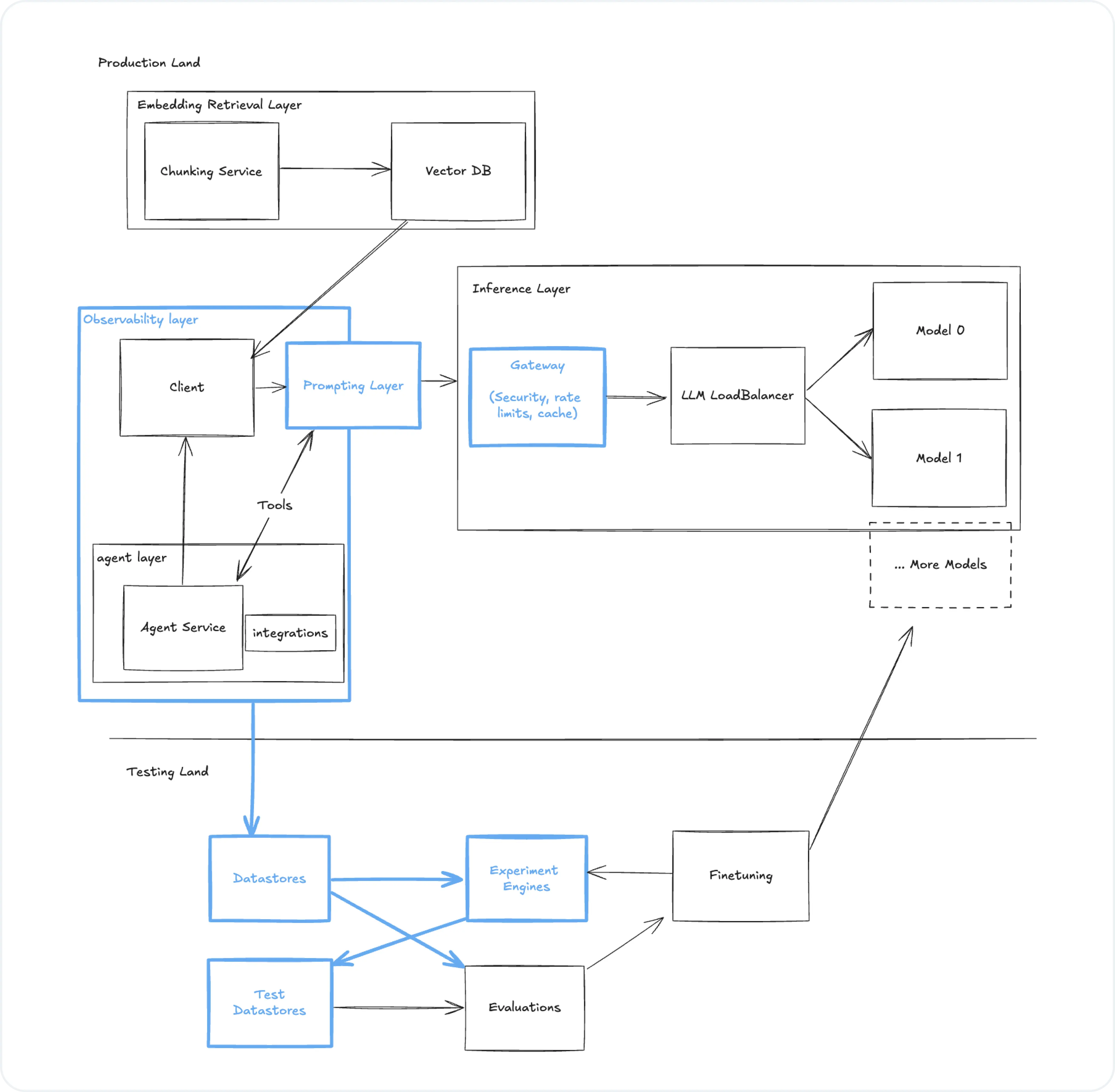

Anatomy of the LLM Stack

Rather than prescribing a specific stack choice, this blog will outline the key components for building a robust LLM stack. We’ll explore how these components work together to create powerful AI applications that enhance user experience.

Let’s dissect the architecture of the LLM stack mentioned in our companion blog post:

- Observability Layer

- Inference Layer

- Testing & Experimentation Layer

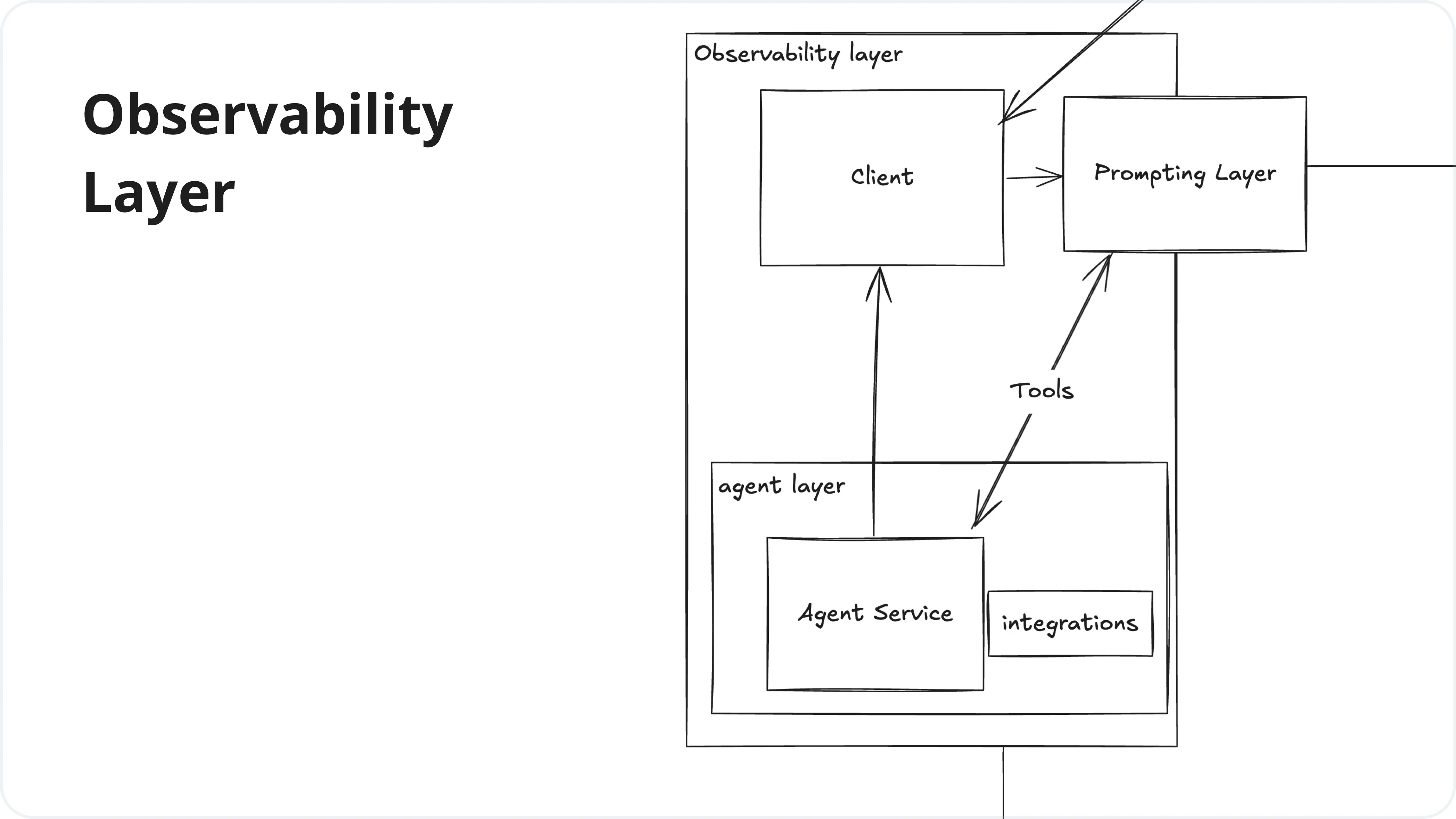

1. Observability Layer

This layer focuses on monitoring, tracking, and analyzing the performance and behavior of LLM applications. It provides insight into how models are being used and how they perform, and it helps with debugging and optimizing the system.

| Service | Tools |
|---|---|
| Observability | Helicone, LangSmith, Langfuse, Traceloop, PromptLayer, HoneyHive, AgentOps |
| Clients | LangChain, LiteLLM, LlamaIndex |
| Agents | Dify, CrewAI, AutoGen |
| Prompting Layer | Helicone Prompting, PromptLayer, Promptfoo |
| Integrations | Zapier, LlamaIndex |
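As a rough illustration of what an observability layer records, the sketch below wraps any LLM call and logs latency, payload sizes, and errors. It is not the API of any tool listed above; `Tracer`, `CallRecord`, and the `llm_call` signature are assumptions made for this example.

```python
import time
from dataclasses import dataclass, field
from typing import Callable, List, Optional


@dataclass
class CallRecord:
    """One logged LLM request (hypothetical schema)."""
    model: str
    prompt_chars: int
    response_chars: int
    latency_s: float
    error: Optional[str] = None


@dataclass
class Tracer:
    records: List[CallRecord] = field(default_factory=list)

    def traced(self, model: str, llm_call: Callable[[str], str]) -> Callable[[str], str]:
        """Wrap llm_call so that every invocation is recorded."""
        def wrapper(prompt: str) -> str:
            start = time.perf_counter()
            try:
                response = llm_call(prompt)
            except Exception as exc:
                # Failed calls are logged too, then the error is re-raised.
                elapsed = time.perf_counter() - start
                self.records.append(CallRecord(model, len(prompt), 0, elapsed, repr(exc)))
                raise
            elapsed = time.perf_counter() - start
            self.records.append(CallRecord(model, len(prompt), len(response), elapsed))
            return response
        return wrapper


# Usage with a stand-in model function instead of a real provider.
tracer = Tracer()
ask = tracer.traced("demo-model", lambda prompt: prompt.upper())
ask("hello")
print(tracer.records[0])
```

Real observability tools typically capture more than this (token counts, cost, user and session identifiers), but the wrapping pattern is similar.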

You might be interested:

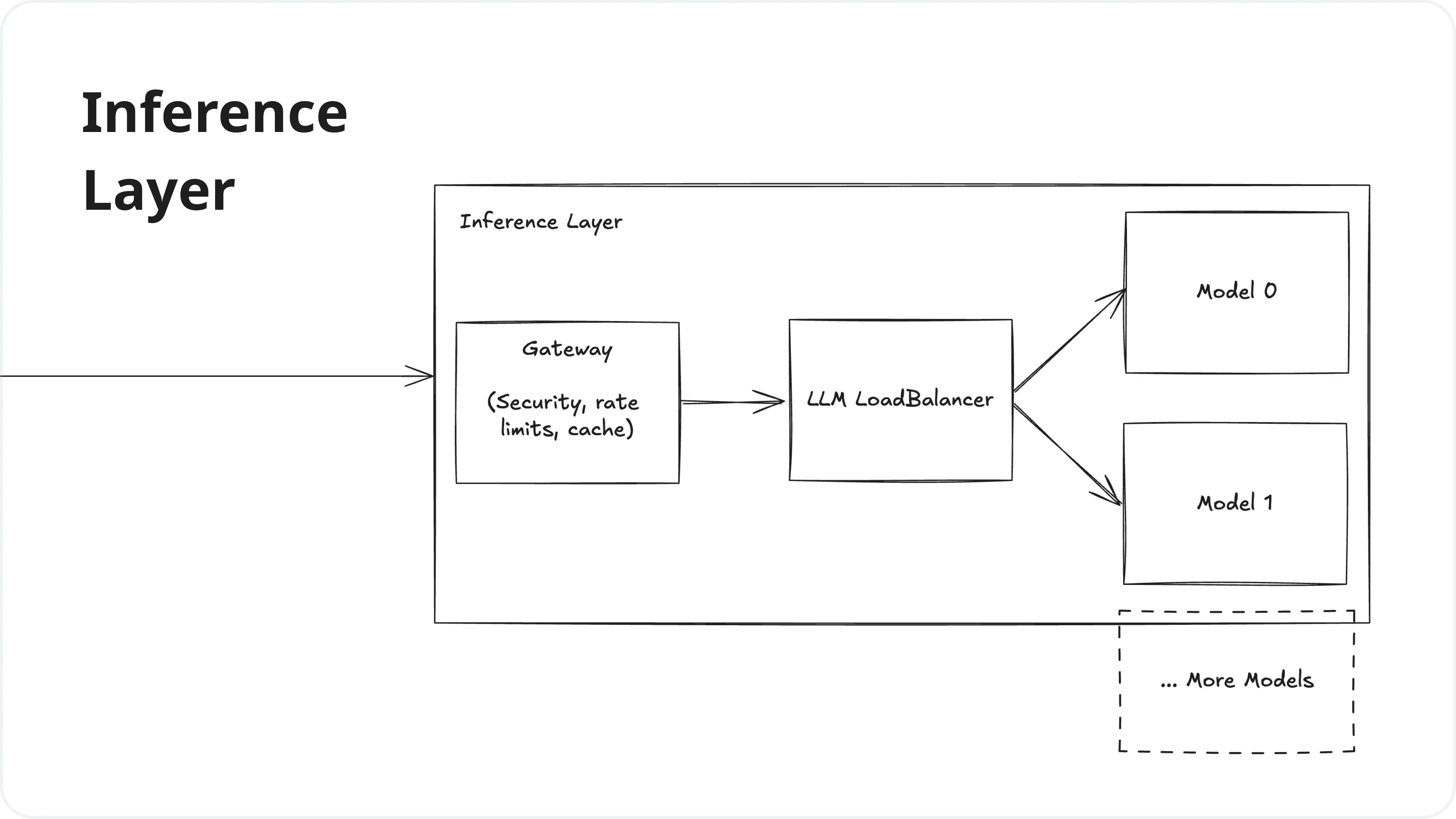

2. Inference Layer

The Inference Layer is responsible for processing requests to the LLM, managing model deployments, and load balancing. It handles the actual execution of the language models and ensures efficient and scalable access to these models.

| Service | Tools |
|---|---|
| Gateway | Helicone Gateway, Cloudflare AI, Portkey, KeywordsAI |
| LLM Load Balancer | Martian, LiteLLM |
| Model Providers | OpenAI (GPT), Meta (Llama), Google (Gemini), Mistral, Anthropic (Claude), TogetherAI, Anyscale, Groq, Amazon (Bedrock) |
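The routing role of a gateway or load balancer can be sketched as round-robin selection with fallback on failure. This is a simplified illustration under stated assumptions, not how any listed product works; the `Gateway` class and the provider callables are hypothetical.

```python
import itertools
from typing import Callable, Dict, Tuple

# A provider is any callable that takes a prompt and returns a completion.
Provider = Callable[[str], str]


class Gateway:
    """Round-robin over providers, falling back to the next one on failure."""

    def __init__(self, providers: Dict[str, Provider]):
        if not providers:
            raise ValueError("at least one provider is required")
        self._providers = providers
        self._order = itertools.cycle(list(providers))

    def complete(self, prompt: str) -> Tuple[str, str]:
        """Return (provider_name, response) from the first provider that succeeds."""
        errors = {}
        for _ in range(len(self._providers)):
            name = next(self._order)
            try:
                return name, self._providers[name](prompt)
            except Exception as exc:
                errors[name] = exc  # remember the failure and try the next provider
        raise RuntimeError(f"all providers failed: {errors}")


def flaky_provider(prompt: str) -> str:
    raise TimeoutError("provider unavailable")


def healthy_provider(prompt: str) -> str:
    return f"echo: {prompt}"


gateway = Gateway({"primary": flaky_provider, "backup": healthy_provider})
print(gateway.complete("hello"))
```

A production gateway would add concerns this sketch leaves out, such as rate limits, retries with backoff, and latency- or cost-aware routing.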

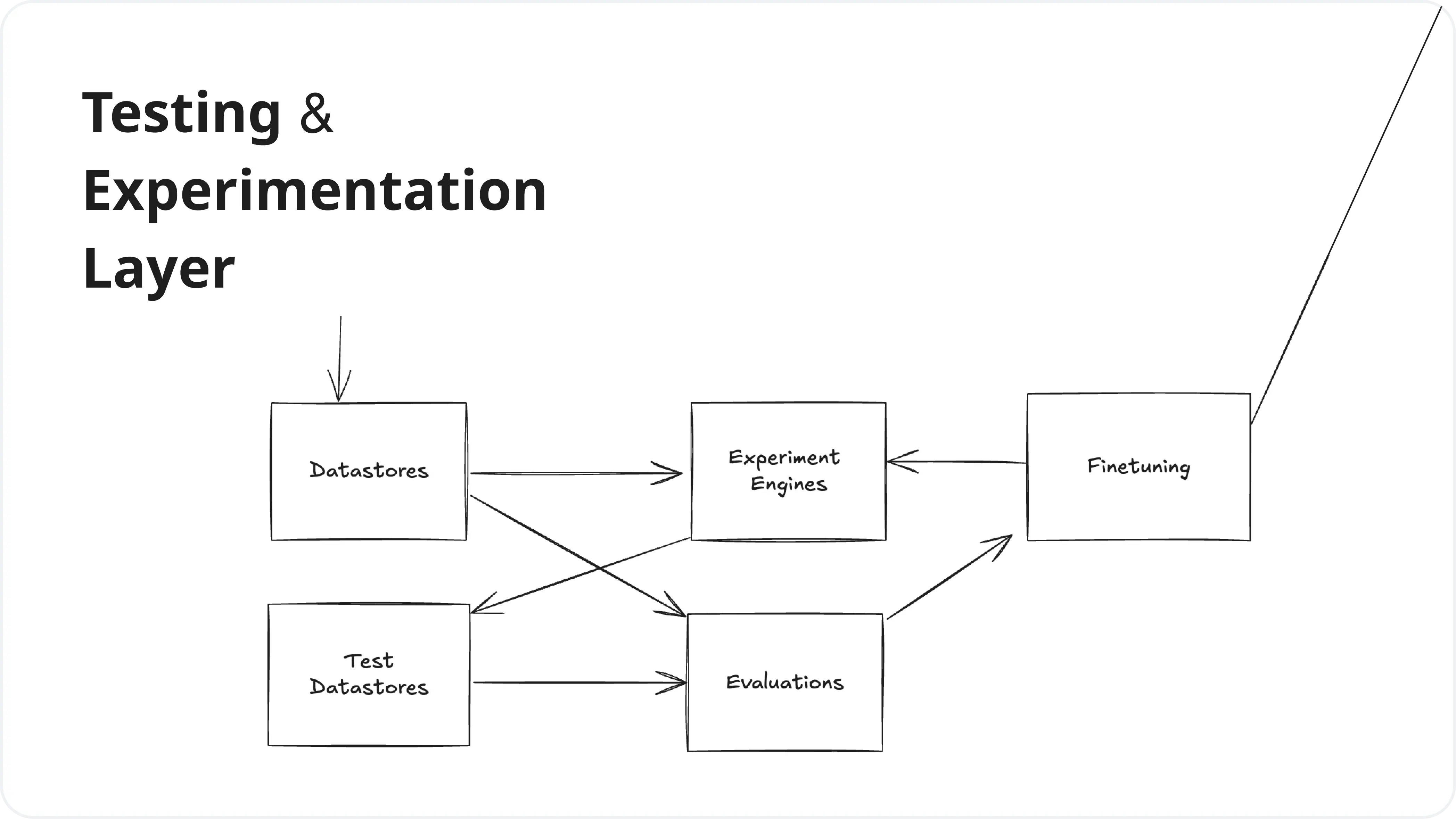

3. Testing & Experimentation Layer

This layer enables developers to test, evaluate, and experiment with different prompts, models, and configurations. It provides tools for fine-tuning models, running experiments, and assessing the quality and performance of LLM outputs.

| Service | Tools |
|---|---|
| Datastores | Helicone API, Langfuse, LangSmith |
| Experimentation | Helicone Experiments, Promptfoo, Braintrust |
| Evaluators | Promptfoo, Lastmile, BaseRun |
| Fine Tuning | OpenPipe, Autonomi |
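A prompt experiment boils down to running each prompt variant over the same test cases and scoring the outputs. The sketch below shows that loop with a stand-in model and a simple substring evaluator; `run_experiment`, `contains_expected`, and the test-case format are assumptions for illustration, not the interface of any tool in the table.

```python
from typing import Callable, Dict, List


def contains_expected(output: str, expected: str) -> float:
    """Score 1.0 if the expected answer appears in the output, else 0.0."""
    return 1.0 if expected.lower() in output.lower() else 0.0


def run_experiment(
    prompt_templates: Dict[str, str],
    test_cases: List[Dict[str, str]],
    llm_call: Callable[[str], str],
    evaluator: Callable[[str, str], float] = contains_expected,
) -> Dict[str, float]:
    """Return the mean evaluator score for each prompt template."""
    if not test_cases:
        raise ValueError("test_cases must not be empty")
    scores = {}
    for name, template in prompt_templates.items():
        results = [
            evaluator(llm_call(template.format(input=case["input"])), case["expected"])
            for case in test_cases
        ]
        scores[name] = sum(results) / len(results)
    return scores


def fake_llm(prompt: str) -> str:
    """Stand-in model: only answers correctly when told to be concise."""
    return "Paris" if "concise" in prompt else "I am not sure"


templates = {"v1": "Answer: {input}", "v2": "Be concise. {input}"}
cases = [{"input": "What is the capital of France?", "expected": "Paris"}]
print(run_experiment(templates, cases, fake_llm))
```

Swapping `fake_llm` for a real provider call and `contains_expected` for a stricter evaluator keeps the same structure.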

Where Helicone Fits Into Your LLM Stack

Helicone isn’t just another tool in the LLM stack - it’s a game-changer for building AI applications. Our primary focus areas, Gateway and Observability, are crucial for building robust, scalable LLM applications. We offer:

- Efficient LLM Cache: Optimize your API calls and reduce costs.

- Real-Time Monitoring: Track your embedding models and external API usage.

- Orchestration Framework: Streamline your data processing and machine learning workflows.
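To show why caching cuts API calls, here is a minimal sketch of an exact-match response cache keyed on the model, prompt, and parameters. This is a generic illustration and not Helicone's implementation; `LLMCache` and the `llm_call(model, prompt, **params)` signature are hypothetical.

```python
import hashlib
import json
from typing import Callable, Dict


class LLMCache:
    """In-memory exact-match cache in front of an LLM call."""

    def __init__(self, llm_call: Callable[..., str]):
        self._llm_call = llm_call
        self._store: Dict[str, str] = {}
        self.hits = 0
        self.misses = 0

    @staticmethod
    def _key(model: str, prompt: str, params: dict) -> str:
        # Sort keys so identical requests always hash to the same value.
        payload = json.dumps({"model": model, "prompt": prompt, "params": params}, sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()

    def complete(self, model: str, prompt: str, **params) -> str:
        key = self._key(model, prompt, params)
        if key in self._store:
            self.hits += 1
            return self._store[key]
        self.misses += 1
        response = self._llm_call(model, prompt, **params)
        self._store[key] = response
        return response


def fake_llm(model: str, prompt: str, **params) -> str:
    """Stand-in for a paid provider call."""
    return f"{model} says: {prompt}"


cache = LLMCache(fake_llm)
cache.complete("demo-model", "hello", temperature=0)
cache.complete("demo-model", "hello", temperature=0)  # served from the cache
print(cache.hits, cache.misses)
```

Exact-match caching only helps when requests repeat verbatim, so it fits deterministic settings (for example, temperature 0) better than sampled ones.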

The Future of LLM Applications

As we look ahead, several trends are shaping the future of LLM applications:

- Increased focus on specialized, domain-specific models

- Enhanced privacy and security measures for LLM deployments

- Improved integration between LLMs and traditional software stacks

- Advancements in multimodal AI capabilities

The LLM Stack is revolutionizing AI application development, enabling more robust and scalable solutions. As LLM architectures evolve, the focus is on creating cost-effective, powerful AI systems that expand the frontiers of machine learning and artificial intelligence. This new paradigm empowers developers to meet the growing demands of our AI-driven world with increased efficiency and innovation.